Katie: If you work anywhere near technology today, you know the feeling. The information coming at you about digital security is less like a stream and more like a fire hose.

James: A high pressure fire hose.

Katie: Exactly. We have thousands upon thousands of new vulnerabilities reported every single year. And the sheer volume is overwhelming. You need to know not just what a vulnerability is, but how they're found and maybe most critically, how to prioritize the fixes.

James: And you needed that answer yesterday.

Katie: You really did. So our mission today is to give you that shortcut. We're doing a deep dive on modern vulnerability scanning, and we're using excerpts from a 2025 strategy guide that frankly moves past the basics and focuses on the advanced stuff.

James: Right, the prioritization and workflow techniques you actually need to survive this constant flood of risk.

Katie: OK, let's unpack this. At its core, what is vulnerability scanning?

James: Well, think of it as this automated process. Its job is to identify all your digital assets, figure out their attributes, and then start hunting for security weaknesses.

Katie: So things like software flaws or misconfigurations.

James: Exactly. Misconfigurations, missing patches. It's looking across everything you own. Networks, servers, applications. It really is the core engine that fuels your proactive defense.

Katie: And that engine analogy is perfect because this isn't some optional, experimental thing.

James: Not at all. This is foundational. Standard guidance, like NIST's, classifies this as a core security control. It's basic hygiene.

Katie: And what does that guidance really emphasize?

James: It really hammers on two things. First, you have to define the breadth and depth of coverage you're getting.

Katie: So are you scanning everything?

James: And how deeply are you scanning it? Second, and this is critical, it emphasizes using privileged access when you scan to get the most thorough results.

Katie: Got it.

James: But here's the crucial thing to get right out of the gate. Scanning is just one step. It's only the data gathering part.

Katie: OK.

James: It lives inside a much bigger vulnerability management lifecycle. You start with discovery and scanning, then you move to assessment, then comes that make-or-break step of prioritization, then remediation, and finally, verification.

Katie: Verification. So you have to check that the fix actually worked.

James: If you forget to verify, you haven't closed the loop. You've just created paperwork.
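The lifecycle James walks through can be sketched as an ordered pipeline in which a finding only counts as closed once verification succeeds. This is a minimal illustration, not any product's data model; the `Stage` names and the `advance` helper are hypothetical.

```python
from enum import Enum

class Stage(Enum):
    # Lifecycle stages in the order described in the conversation
    DISCOVERY_AND_SCANNING = 1
    ASSESSMENT = 2
    PRIORITIZATION = 3
    REMEDIATION = 4
    VERIFICATION = 5
    CLOSED = 6

def advance(stage: Stage, rescan_clean: bool = False) -> Stage:
    """Move a finding to its next stage (hypothetical helper).

    From VERIFICATION, a finding reaches CLOSED only if the rescan is clean;
    otherwise it loops back to REMEDIATION instead of becoming paperwork.
    """
    if stage is Stage.CLOSED:
        return stage
    if stage is Stage.VERIFICATION:
        return Stage.CLOSED if rescan_clean else Stage.REMEDIATION
    return Stage(stage.value + 1)
```

The loop back to remediation is the "closing the loop" point: without a clean rescan there is no path to `CLOSED`.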

Katie: That lifecycle makes perfect sense. So let's pivot to the why. You mentioned the sheer volume of these things. Organizations can't just scan for compliance anymore, can they?

James: No, they have to scan to survive.

Katie: So what are the biggest drivers pushing them to scan more frequently and with more depth right now?

James: The strategy guide we're using lays out four huge ones. Number one is simple, risk reduction.

Katie: Just cutting your exposure to issues that are already out there.

James: Issues that are already known, publicly reported, and often already being exploited in the wild.

Katie: Right.

James: And tying into that, you have this goal of continuous visibility. This is mandated by modern frameworks. They require continuous vulnerability management.

Katie: So you can't just check once a quarter and feel safe.

James: No way. The attackers are moving way too fast for that.

Katie: But speaking of quarterly checks, that compliance pressure is still there, isn't it?

James: Absolutely. Compliance is still a massive driver. Things like PCI DSS, the rules for payment cards, still require external scans at least quarterly, often by approved scanning vendors.

Katie: So that sets a minimum bar.

James: It's a minimum. But modern strategy says that minimum is no longer sufficient for your internal systems.

Katie: And the fourth driver.

James: Patch acceleration. Scanning gives you the data you need to prioritize, plan, and then verify your patch deployment across everything, IT, OT, cloud assets, all of it.

Katie: So scanning finds the target.

James: And the re-scan confirms the hit.

Katie: What's fascinating here is how the industry has responded to this volume problem. It feels like we've all realized that just relying on the raw severity score, the CVSS score, is a losing game.

James: It is a losing strategy. That score that tells you if something is a 9.8 or a 7.5, it just creates a prioritization nightmare. It's all based on potential, not reality.

Katie: That's the classic problem, right? Everything is a critical, so nothing is. So how do we move past that? Because that 9.8 score used to be the only thing we had.

James: We moved to what's called a multi-signal approach. Today, really effective prioritization needs three essential interconnected data points working together. You still need CVSS, of course.

Katie: OK, so that's your base technical severity.

James: That's your base. But then you have to look at EPSS, the Exploit Prediction Scoring System.

Katie: And this is where the magic starts.

James: This is it. EPSS is an estimate, a probability, that a vulnerability will actually be exploited in the wild in the next 30 days.

Katie: So it filters out all those scary-looking 9.8s that nobody ever actually uses.

James: Exactly. So CVSS tells you how bad it could be if exploited, but EPSS tells you how likely it is that someone will actually exploit it.

Katie: That drastically changes the equation.

James: It does. But there's a third data point, and frankly, it's the most important one, and that's the CISA KEV catalog.

Katie: The Known Exploited Vulnerabilities Catalog.

James: Think of it as the FBI's most wanted list for vulnerabilities. It tracks issues that are actively being abused by real world adversaries right now.

Katie: OK, wait, slow down. If something is in the KEV catalog, does its EPSS score even matter anymore?

James: Not really. KEV is the ultimate reality check. If it's in KEV, it means act now, full stop.

Katie: Because it's not theoretical anymore.

James: It's happening. The trend is clear. Attackers weaponize these things incredibly fast, often within days. So frequent scanning, combined with the discipline to target KEV-listed issues first, drastically shrinks that window of exposure.

Katie: That's the essence of modern prioritization, then.

James: It is.

Katie: It shifts the mentality from fix everything critical to fix the three things attackers are actually using today.

James: Precisely.
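One way to encode the "KEV first, then likelihood, then severity" ordering is a sort key. This is a minimal sketch assuming each finding already carries its CVSS base score, its EPSS probability, and a KEV flag; the `Finding` type, the ordering rule, and the placeholder IDs are illustrative, not a standard.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    cve_id: str     # placeholder identifier in the examples below
    cvss: float     # base severity, 0.0-10.0
    epss: float     # estimated probability of exploitation in the next 30 days, 0.0-1.0
    in_kev: bool    # listed in the CISA KEV catalog

def priority_key(f: Finding) -> tuple:
    # KEV-listed findings sort first (False < True), then higher EPSS,
    # then higher CVSS; negation turns ascending sort into descending.
    return (not f.in_kev, -f.epss, -f.cvss)

def prioritize(findings: list[Finding]) -> list[Finding]:
    return sorted(findings, key=priority_key)
```

With a 9.8 that almost nobody exploits, a 7.5 with a high EPSS, and a 6.5 that is in KEV, this ordering puts the KEV item first and the "scary-looking" 9.8 last.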

Katie: OK. So now that we know why this multi-signal approach is so vital, let's walk through the actual technical workflow. What are the core steps of a modern scanning run?

James: Well, the process starts with some clear administrative groundwork. The first step is scope and inventory.

Katie: You have to know what you have.

James: You have to identify every single asset. Cloud instances, containers, OT devices, everything. And this has to align with those breadth and depth expectations we talked about. You cannot protect what you can't see.

Katie: Right. If you miss that old server tucked in a corner, your scanner will never even know it exists.

James: Exactly. So once you have that inventory, the scanner does a discovery sweep. This is where it builds a fingerprint of the environment.

Katie: Live hosts, open ports, that kind of thing?

James: Yeah, what services are running, what versions they are, and only after that discovery can the detection phase begin.

Katie: This is where it actually looks for the vulnerabilities.

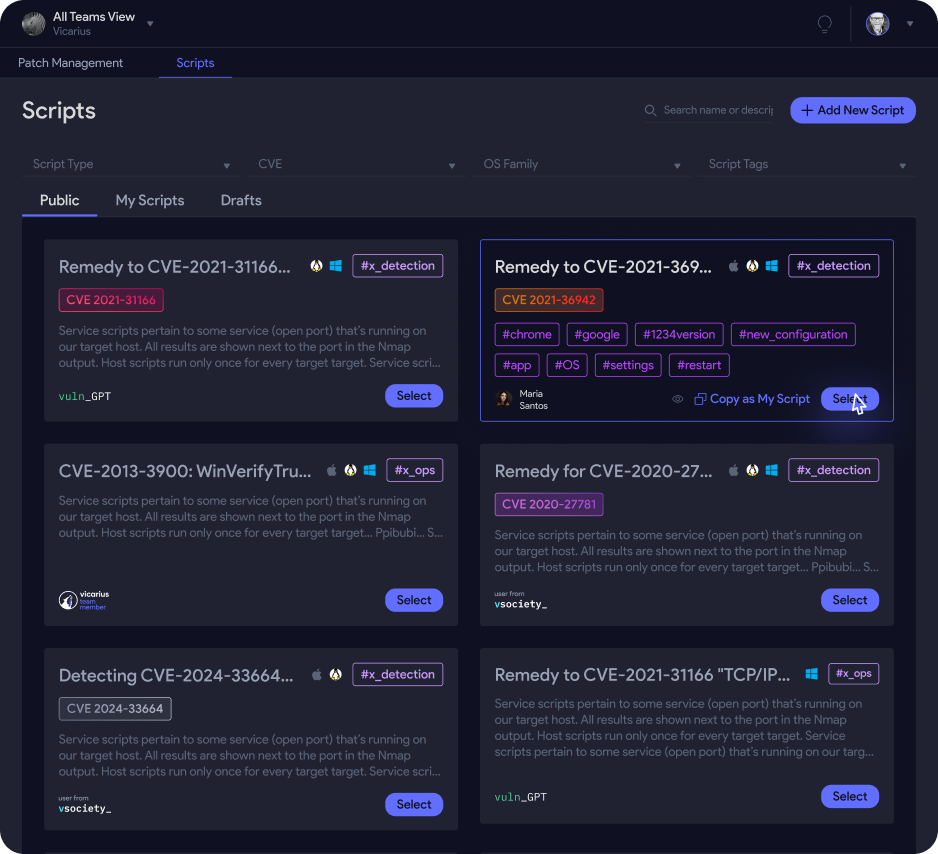

James: Right. The scanner takes those fingerprints, correlates them against all the external vulnerability intelligence, CVE data, NVD data, and runs its checks for missing patches, weak configs, you name it.
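The correlation step can be pictured as matching each discovered (product, version) fingerprint against advisories that name the first fixed version. This is a simplified sketch: the product names and advisory IDs are hypothetical, and it assumes purely numeric dotted versions, which real scanners cannot rely on.

```python
from dataclasses import dataclass

def parse_version(v: str) -> tuple[int, ...]:
    # "2.4.49" -> (2, 4, 49); assumes numeric dotted versions only
    return tuple(int(part) for part in v.split("."))

@dataclass
class Advisory:
    advisory_id: str  # hypothetical identifier
    product: str
    fixed_in: str     # first version that contains the fix

def detect(fingerprints: dict[str, str], advisories: list[Advisory]) -> list[str]:
    """Return advisory IDs whose product was fingerprinted below the fixed version."""
    hits = []
    for adv in advisories:
        version = fingerprints.get(adv.product)
        if version is not None and parse_version(version) < parse_version(adv.fixed_in):
            hits.append(adv.advisory_id)
    return hits
```

For example, a host fingerprinted as running `example-httpd` 2.4.49 matches an advisory fixed in 2.4.51, while a service already past its fixed version, or one not present on the host, does not.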

Katie: And this brings us back to that key recommendation from NIST you mentioned earlier, the access level of the scan. We're talking authenticated versus unauthenticated scans.

James: A crucial distinction.

Katie: But I have to ask, isn't setting up privileged access across thousands of servers a huge pain for IT teams? Is the payoff really worth that operational headache?

James: It is a significant operational challenge, you're absolutely right. But the payoff is immense. A credentialed scan uses host or application credentials to log right into the target system.

Katie: So it's on the inside.

James: It's on the inside. It can do deep internal checks, reading config files, auditing the registry, confirming patch levels with certainty. That thoroughness is why it's so strongly encouraged.

Katie: And an unauthenticated scan?

James: That's like rattling the front door. It can only see what's visible from the network. It's useful for a quick external sweep, but it often misses things inside the system. And crucially, it generates a ton of false positives.

Katie: Ah, because it can't actually confirm if a patch is there or not.

James: It can't. It's just guessing based on network banners.

Katie: So the benefit isn't just depth, it's accuracy. A credentialed scan cuts down on that false positive noise.

James: That's right. And that alone can save IT teams endless hours chasing ghosts. That noise reduction is often the number one reason people switch, because it improves the relationship between security and IT.
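The false-positive problem comes down to what each scan type can observe. In this hypothetical scenario, a vendor backported a fix without changing the advertised version: the banner-only check flags the host, while the credentialed check reads the installed patch list and clears it. Both functions and the `PATCH-123` identifier are illustrative assumptions.

```python
def parse_version(v: str) -> tuple[int, ...]:
    # Numeric dotted versions only, for illustration
    return tuple(int(part) for part in v.split("."))

def unauthenticated_check(banner_version: str, fixed_in: str) -> bool:
    # Only the network banner is visible, so the check can only
    # infer "vulnerable" from the advertised version string.
    return parse_version(banner_version) < parse_version(fixed_in)

def credentialed_check(installed_patches: set[str], required_patch: str) -> bool:
    # Logged in, the scanner reads the installed patch inventory directly
    # and reports vulnerable only if the fix is actually missing.
    return required_patch not in installed_patches
```

Here `unauthenticated_check("1.2", "1.3")` returns `True` (flagged), while `credentialed_check({"PATCH-123"}, "PATCH-123")` returns `False`: the ghost that IT would otherwise chase.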

Katie: Now, beyond the network and servers, we have to talk about applications. That's where most modern breaches happen, right? Web apps and APIs.

James: That's where DAST comes in: dynamic application security testing.

Katie: So DAST is critical because it's actually interacting with the running application.

James: It's simulating a real attacker. It throws weird inputs at web forms or API endpoints, and it's looking for things like cross-site scripting, SQL injection, broken authentication, things that only show up when the app is actually running.
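The core of a reflected cross-site scripting check is simple: send a unique marker payload and see whether it comes back unescaped. A real DAST tool sends HTTP requests to the running app; in this offline sketch a plain function stands in for the request handler, and the marker string and both handlers are hypothetical.

```python
import html
from typing import Callable

# Hypothetical unique payload, so a match can't be a coincidence
MARKER = "<script>dast-probe-7f3a</script>"

def reflected_xss_probe(handler: Callable[[str], str]) -> bool:
    """Send the marker as input and report whether it is reflected unescaped."""
    response = handler(MARKER)
    return MARKER in response

def vulnerable_handler(q: str) -> str:
    # Echoes user input straight into HTML
    return f"<p>Results for {q}</p>"

def safe_handler(q: str) -> str:
    # Escapes user input before rendering, so the marker is neutralized
    return f"<p>Results for {html.escape(q)}</p>"
```

The probe flags `vulnerable_handler` and not `safe_handler`; only runtime behavior reveals the difference, which is the gap DAST fills next to static analysis.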

Katie: So if my developers used a static code analysis tool, a SAST tool, to check the code before deployment, DAST is like the final quality check on the live system.

James: That's a perfect way to put it. They complement each other. SAST checks the blueprint. DAST checks the finished building.

Katie: Got it.

James: So once you have all this data from network, infrastructure, and DAST scans, you hit those back-end steps: scoring and prioritization.

Katie: Using that multi-signal approach we talked about.

James: CVSS, EPSS, KEV. Then reporting and ticketing, and that final mandatory step: verify and trend. You have to rescan to confirm issues are closed and track your risk reduction over time.

Katie: Before we put this all together into a final strategy, let's clear up some common confusion. People misuse these terms all the time. Can you really clearly differentiate vulnerability scanning from the bigger practices it enables?

James: Yeah, absolutely. Think of scanning as just one tool in the toolbox. First, let's do scanning versus vulnerability assessment.

Katie: OK.

James: Scanning is the automated data collection. It's the machine output of CVEs and config issues. The assessment is the human process of interpreting that raw data.

Katie: Adding the business context.

James: Exactly. Is this server running HR payroll, that kind of thing? And then recommending what to do.

Katie: And then the big one, scanning versus penetration testing. That's where most people get tripped up.

James: A key distinction. Scanning is automated and stops at detection. It looks for known flaws. Pen testing is active and human-driven.

Katie: So a pen tester is actually trying to break in.

James: They use exploitation to validate the real-world impact. They'll try to chain vulnerabilities together. For example, a DAST scan might find a SQL injection flaw.

Katie: OK.

James: A penetration tester would then actively exploit that flaw to, say, steal mock data, proving the critical impact and validating the risk.

Katie: So scanning provides the hygiene baseline and the pen test validates the worst case scenario. Got it. Here's where it gets really interesting. Let's talk about the common operational challenges and the specific fixes this guide recommends. These are the aha moments.

James: Let's start with the one you mentioned, false positives and alert fatigue. If every sysadmin gets thousands of high-priority tickets, they just start ignoring them.

Katie: It's just noise.

James: The fix is operational. You mandate credentialed scans for accuracy and you leverage those KEV and EPSS filters to focus only on exploitable high likelihood risk.

Katie: Another massive problem is coverage gaps and shadow IT. The things you don't know about are always what get you.

James: And you close those gaps by making your inventory dynamic. You have to integrate your scanning platform with every source of truth you have.

Katie: Your CMDBs, cloud APIs?

James: Cloud APIs, EDR systems, MDM solutions. If an asset registers itself anywhere, it has to automatically get added to the scan scope.
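A dynamic inventory can be as simple as a union over every source of truth, re-run on each sync so anything that registers itself anywhere lands in scope. This is a minimal sketch; the source names and asset IDs are hypothetical, and a real integration would pull them from each system's API.

```python
def sync_scope(current_scope: set[str], sources: dict[str, set[str]]) -> tuple[set[str], set[str]]:
    """Union every inventory source into the scan scope.

    Returns (new_scope, newly_added). Nothing is dropped here; retiring
    assets is assumed to be a separate, deliberate step.
    """
    seen = set().union(*sources.values())
    newly_added = seen - current_scope
    return current_scope | seen, newly_added
```

The `newly_added` set is the interesting output: those are the shadow IT assets the previous static scope would have missed.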

Katie: The scan scope should never be static. That makes perfect sense. But what about the risk of the scan itself causing problems, that scan-induced instability, especially with fragile OT systems?

James: This is a critical safety issue. The fix is twofold. First, for standard IT, you use safe checks and rate limiting.

Katie: Okay.

James: Second, for those sensitive systems like OT or IoT devices, you must use passive discovery.

Katie: So you're just listening to network traffic instead of actively poking at the device.

James: Exactly. Active scanning could disrupt operations or break a physical process. You have to adapt the tool to the environment.
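"Adapt the tool to the environment" can be expressed as a per-asset-class scan profile. This sketch assumes a hypothetical policy table; the class names and probe rates are placeholders to tune per environment, not vendor defaults. Unknown asset classes fall back to the cautious passive profile.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ScanProfile:
    mode: str                # "active" or "passive"
    safe_checks_only: bool   # skip checks known to risk crashing services
    max_probes_per_sec: int  # rate limit; 0 means no active probes at all

# Hypothetical policy table: standard IT gets rate-limited safe checks,
# fragile OT/IoT gets passive discovery only.
PROFILES = {
    "it_server": ScanProfile("active", True, 100),
    "endpoint": ScanProfile("active", True, 50),
    "ot": ScanProfile("passive", True, 0),
    "iot": ScanProfile("passive", True, 0),
}

def profile_for(asset_class: str) -> ScanProfile:
    # Unknown or unclassified assets default to passive listening
    return PROFILES.get(asset_class, ScanProfile("passive", True, 0))
```

Defaulting to passive means a misclassified production line controller gets listened to rather than poked.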

Katie: And the final chronic issue, the process breakdown between security and IT.

James: The ultimate handoff failure.

Katie: Security finds the problems, but IT is just overwhelmed and they fix them too slowly. How do we build that bridge?

James: The fixes have to be structural and mandatory. You formalize strict SLAs, service level agreements, for how quickly things get fixed.

Katie: You put timers on it.

James: You have to. And you automate ticket creation right into the IT system. No more spreadsheets. And most critically, you make verification via a rescan mandatory for ticket closure. Without that forced verification, the whole process just collapses.
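The two structural fixes, SLA timers and verification-gated closure, fit in a small ticket model. This is a sketch under assumptions: the tier names and SLA day counts are hypothetical placeholders for a policy decision, and `Ticket` is not any ticketing system's API.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical SLA table: days allowed to remediate, per priority tier
SLA_DAYS = {"kev": 7, "critical": 15, "high": 30, "medium": 90}

@dataclass
class Ticket:
    finding_id: str
    tier: str
    opened: date
    verified_by_rescan: bool = False
    closed: bool = False

    @property
    def due(self) -> date:
        return self.opened + timedelta(days=SLA_DAYS[self.tier])

    def is_overdue(self, today: date) -> bool:
        return not self.closed and today > self.due

    def close(self) -> None:
        # Closure is refused until a rescan has confirmed the fix
        if not self.verified_by_rescan:
            raise ValueError(f"{self.finding_id}: rescan verification required before closure")
        self.closed = True
```

Raising on unverified closure is the code-level version of "no rescan, no closure": the workflow cannot quietly mark a ticket done.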

Katie: Okay, let's synthesize all of this into a strategy guide for our listener. If you're redesigning your program for 2025, what are the non-negotiable best practices?

James: We'll start at the foundation. Make asset inventory your control zero.

Katie: You absolutely cannot manage risk for assets you cannot see.

James: It means continuous syncing with everything, cloud providers, EDR, network tools, to make sure you are constantly measuring both the breadth and depth of your coverage.

Katie: And if we connect this back to the bigger picture...

James: The strategy guide is really clear. You have to move away from outdated annual or quarterly internal scanning. Quarterly only satisfies external compliance. It's totally insufficient for internal assets, where the bulk of the risk really sits.

Katie: So what should the frequency be?

James: It has to increase significantly. You should be aiming for continuous scanning. That might mean weekly or monthly scans for endpoints, and daily scans for your critical high-change servers. Attackers aren't waiting for your quarterly report.
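The cadences James mentions translate directly into a per-class schedule. This sketch uses the intervals from the conversation (daily for critical servers, weekly for endpoints, roughly quarterly for external compliance scans); the asset-class names are hypothetical labels.

```python
from datetime import date, timedelta

# Intervals from the discussion; asset-class names are hypothetical
SCAN_INTERVAL = {
    "critical_server": timedelta(days=1),
    "endpoint": timedelta(weeks=1),             # monthly is the looser end of the range
    "external_compliance": timedelta(days=90),  # roughly quarterly
}

def next_scan(asset_class: str, last_scan: date) -> date:
    return last_scan + SCAN_INTERVAL[asset_class]

def is_due(asset_class: str, last_scan: date, today: date) -> bool:
    return today >= next_scan(asset_class, last_scan)
```

Keeping the cadence in a table makes the policy explicit and easy to tighten per class without touching the scheduling logic.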

Katie: And when you prioritize, you have to lock into that multi-signal approach. You can't just look at the CVSS score by itself.

James: Right. You have to combine four things: severity from CVSS v4, likelihood from EPSS, that reality check from the CISA KEV catalog, and crucially, your internal business context.

Katie: The criticality of the asset. A 9.8 on a test server isn't the same as an 8.0 on your domain controller.
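Katie's comparison can be made concrete with a composite score that folds in asset criticality. The multiplicative formula and the weight values below are one arbitrary illustrative choice, not a standard or the guide's method; KEV listing is treated as certainty of exploitation.

```python
# Hypothetical asset-criticality weights (business context)
CRITICALITY = {"test": 0.3, "standard": 1.0, "crown_jewel": 2.0}

def risk_score(cvss: float, epss: float, in_kev: bool, asset_tier: str) -> float:
    """Illustrative composite: severity x likelihood x business context.

    A KEV listing sets likelihood to 1.0, since exploitation is already observed.
    """
    likelihood = 1.0 if in_kev else epss
    return cvss * likelihood * CRITICALITY[asset_tier]
```

With equal EPSS of 0.2, a 9.8 on a test server scores about 0.59, while an 8.0 on a domain controller weighted as a crown jewel scores 3.2, so the domain controller wins.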

James: Not even close. And remember, the whole point of this is closure. The strategy requires tying your scan results directly to the patch management workflow. You plan the patch, you test it, you deploy it.

Katie: And then you immediately verify it with a rescan.

James: The process is never, ever complete until that rescan confirms it's closed.

Katie: And a final practical tip, be cloud and OT aware.

James: Use cloud-native tools that use APIs for visibility. And like we said, use passive discovery for fragile environments. Do not treat a high-speed production line controller the same way you treat a marketing laptop.

Katie: So what does this all mean? I mean, it's clear that vulnerability scanning is absolutely foundational. It's how you discover what you own and how you feed all your patching and hardening efforts. But the true modern value is combining that continuous high-fidelity coverage from credentialed scanning with risk-based prioritization using all three signals: CVSS, EPSS, and KEV. That's what turns raw data into high-priority action.

James: And if you look just beyond the immediate process, the whole field is evolving past just finding CVEs. It's moving toward this broader concept called exposure management, or CTEM, continuous threat exposure management.

Katie: Exposure management.

James: Yeah, it means expanding the focus to include misconfigurations, identity issues, the entire external attack surface, anything that increases your organizational risk, not just missing patches. That holistic view is the future.

Katie: That leads perfectly into a final thought for you to consider. If we agree asset inventory is control zero, and we now have all this amazing data, CVSS v4, EPSS, and KEV, to target only the most exploitable issues...

James: The tools are there.

Katie: They are. So what is the actual impact on your mean time to remediate, your MTTR, if you make a policy decision to only focus on those KEV-listed vulnerabilities that also score high on EPSS?

James: I mean, is it finally time to stop treating every single high severity finding equally?

Katie: Especially when we know, what, statistically, 90% of them will never even be exploited.
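The code below doesn't answer Katie's question, but it sketches how you could measure it: compute MTTR from (opened, closed) dates and compare the full backlog against the focused subset. The `in_focus` rule and its 0.5 EPSS threshold are hypothetical policy choices, not recommendations from the guide.

```python
from datetime import date
from statistics import mean

def mttr_days(tickets: list[tuple[date, date]]) -> float:
    """Mean time to remediate, in days, over (opened, closed) date pairs."""
    return mean((closed - opened).days for opened, closed in tickets)

def in_focus(in_kev: bool, epss: float, epss_threshold: float = 0.5) -> bool:
    # Hypothetical policy from the question: KEV-listed AND high EPSS
    return in_kev and epss >= epss_threshold
```

Tracking `mttr_days` separately for findings where `in_focus` is true, before and after the policy change, would show whether narrowing the focus actually shortens remediation time.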

James: It's a powerful question.

Katie: A powerful question worth exploring. We hope this deep dive gives you the knowledge to apply that risk-based prioritization wisely. Thanks for joining us.